In theory, artificial intelligence should be great at helping out. “Our job is pattern recognition,” says Andrew Norgan, a pathologist and medical director of the Mayo Clinic’s digital pathology platform. “We look at the slide and we gather pieces of information that have been proven to be important.”

Visual analysis is something that AI has gotten quite good at since the first image recognition models began taking off nearly 15 years ago. Even though no model will be perfect, you can imagine a powerful algorithm someday catching something that a human pathologist missed, or at least speeding up the process of getting a diagnosis. We’re starting to see lots of new efforts to build such a model (at least seven attempts in the last year alone), but all of them remain experimental. What will it take to make them good enough to be used in the real world?

Details about the latest effort to build such a model, led by the AI health company Aignostics with the Mayo Clinic, were published on arXiv earlier this month. The paper has not been peer-reviewed, but it reveals a great deal about the challenges of bringing such a tool to real clinical settings.

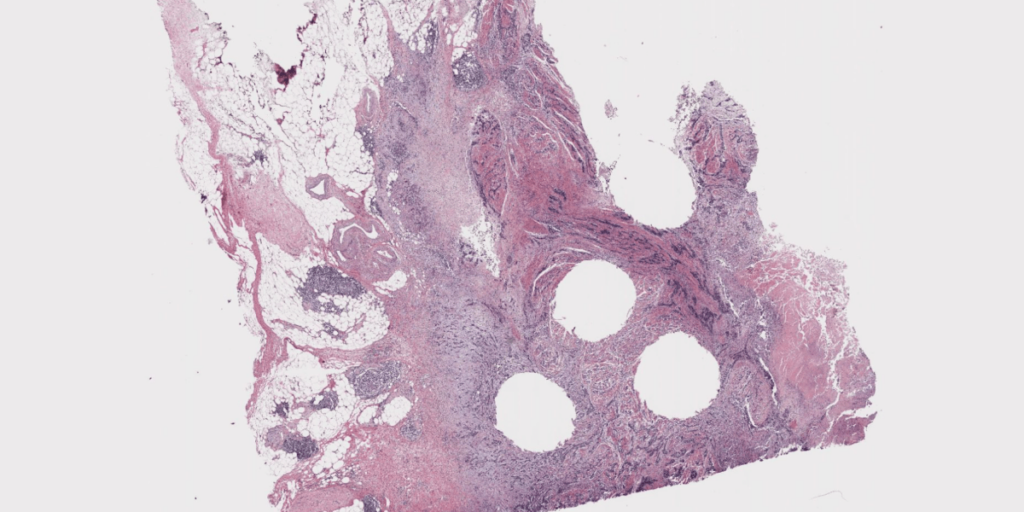

The model, called Atlas, was trained on 1.2 million tissue samples from 490,000 cases. Its accuracy was tested against six other leading AI pathology models. These models compete on shared tests like classifying breast cancer images or grading tumors, where the model’s predictions are compared with the correct answers given by human pathologists. Atlas beat rival models on six out of nine tests. It earned its highest score for categorizing cancerous colorectal tissue, reaching the same conclusion as human pathologists 97.1% of the time. For another task, though, classifying tumors from prostate cancer biopsies, Atlas beat the other models’ high scores with a score of just 70.5%. Its average across nine benchmarks showed that it got the same answers as human experts 84.6% of the time.

Let’s think about what this means. The best way to know what’s happening to cancerous cells in tissues is to have a sample examined by a pathologist, so that’s the performance that AI models are measured against. The best models are approaching humans in particular detection tasks but lagging behind in many others. So how good does a model have to be to be clinically useful?

“Ninety percent is probably not good enough. You need to be even better,” says Carlo Bifulco, chief medical officer at Providence Genomics and co-creator of GigaPath, one of the other AI pathology models examined in the Mayo Clinic study. But, Bifulco says, AI models that don’t score perfectly can still be useful in the short term, and could potentially help pathologists speed up their work and make diagnoses more quickly.

What obstacles are getting in the way of better performance? Problem number one is training data.

“Fewer than 10% of pathology practices in the US are digitized,” Norgan says. That means tissue samples are placed on slides and analyzed under microscopes, and then stored in massive registries without ever being documented digitally. Though European practices tend to be more digitized, and there are efforts underway to create shared data sets of tissue samples for AI models to train on, there’s still not a ton to work with.