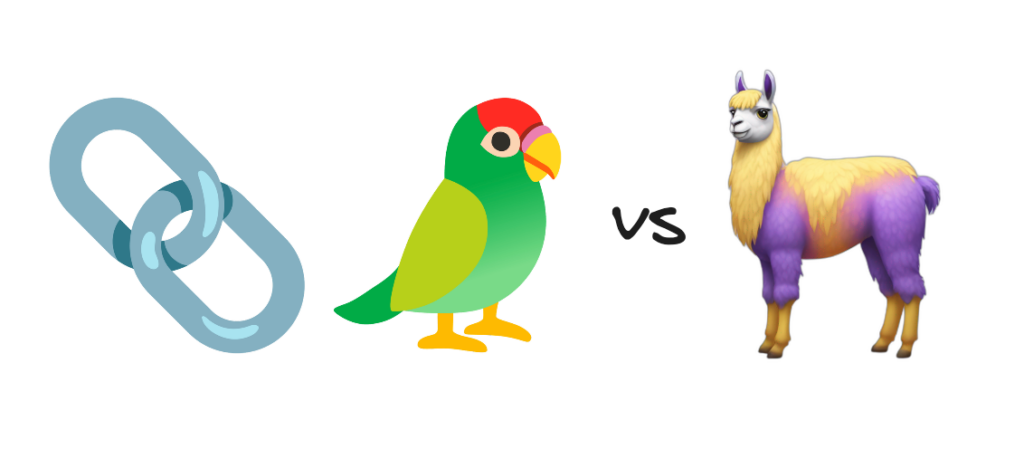

Large Language Models (LLMs) are now widely available for basic chatbot-based usage, but integrating them into more complex applications can be difficult. Luckily for developers, there are tools that streamline the integration of LLMs into applications, two of the most prominent being LangChain and LlamaIndex.

These two open-source frameworks bridge the gap between the raw power of LLMs and practical, user-ready apps, each offering a unique set of tools that support developers in their work with LLMs. These frameworks streamline key capabilities for developers, such as RAG workflows, data connectors, retrieval, and querying methods.

In this article, we’ll explore the purposes, features, and strengths of LangChain and LlamaIndex, providing guidance on when each framework excels. Understanding the differences will help you make the right choice for your LLM-powered applications.

Overview of Each Framework:

LangChain

Core Purpose & Philosophy:

LangChain was created to simplify the development of applications that rely on large language models by providing abstractions and tools to build complex chains of operations that can leverage LLMs effectively. Its philosophy centers around building flexible, reusable components that make it easy for developers to create intricate LLM applications without needing to code every interaction from scratch. LangChain is particularly suited to applications requiring conversation, sequential logic, or complex task flows that need context-aware reasoning.


Architecture

LangChain’s architecture is modular, with each component built to work independently or together as part of a larger workflow. This modular approach makes it easy to customize and scale, depending on the needs of the application. At its core, LangChain leverages chains, agents, and memory to provide a flexible structure that can handle anything from simple Q&A systems to complex, multi-step processes.

Key Features

Document loaders in LangChain are pre-built loaders that provide a unified interface to load and process documents from different sources and formats, including PDF, HTML, txt, docx, and csv. For example, you can easily load a PDF document using the PyPDFLoader, scrape web content using the WebBaseLoader, or connect to cloud storage services like S3. This functionality is particularly useful when building applications that need to process multiple data sources, such as document Q&A systems or knowledge bases.

from langchain.document_loaders import PyPDFLoader, WebBaseLoader

# Loading a PDF
pdf_loader = PyPDFLoader("doc.pdf")
pdf_docs = pdf_loader.load()

# Loading web content
web_loader = WebBaseLoader("https://nanonets.com")
web_docs = web_loader.load()

Text splitters handle the chunking of documents into manageable, contextually aligned pieces. This is a key precursor to accurate RAG pipelines. LangChain provides various splitting strategies, for example the RecursiveCharacterTextSplitter, which splits text while attempting to maintain inter-chunk context and semantic meaning. You can configure chunk sizes and overlap to balance context preservation against token limits.
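As a rough, library-independent illustration of what chunk size and overlap control, here is a minimal sliding-window sketch. It is not how RecursiveCharacterTextSplitter works internally (that splitter also tries to break on separators); the function name `chunk_text` and the sample string are assumptions made for this example.

```python
def chunk_text(text: str, chunk_size: int, chunk_overlap: int) -> list[str]:
    """Split text into fixed-size windows that share `chunk_overlap` characters."""
    if chunk_overlap >= chunk_size:
        raise ValueError("chunk_overlap must be smaller than chunk_size")
    step = chunk_size - chunk_overlap  # how far each new window advances
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reached the end of the text
    return chunks


sample = "abcdefghijklmnopqrstuvwxyz"
chunks = chunk_text(sample, chunk_size=10, chunk_overlap=4)
for chunk in chunks:
    print(chunk)
```

Each chunk repeats the last four characters of the previous one, so a sentence cut at a boundary still appears whole in at least one chunk. Larger overlap preserves more context but produces more chunks to embed and store.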

from langchain.text_splitter import RecursiveCharacterTextSplitter

splitter = RecursiveCharacterTextSplitter(
    chunk_size=1000,
    chunk_overlap=200,
    separators=["\n\n", "\n", " ", ""]
)

# documents: a list of Document objects, e.g. pdf_docs or web_docs from a loader
chunks = splitter.split_documents(documents)

Prompt templates help standardize prompts for various tasks, ensuring consistency across interactions. LangChain allows you to define these reusable templates with variables that can be filled in dynamically, which is a powerful feature for creating consistent but customizable prompts. This consistency means your application will be easier to maintain and update when necessary. A good technique to use within your templates is ‘few-shot’ prompting, in other words, including examples (positive and negative).

from langchain.prompts import PromptTemplate

# Define a few-shot template with positive and negative examples
template = PromptTemplate(
    input_variables=["topic", "context"],
    template="""Write a summary about {topic} considering this context: {context}

Examples:

### Positive Example 1:
Topic: Climate Change
Context: Recent research on the impacts of climate change on polar ice caps
Summary: Recent studies show that polar ice caps are melting at an accelerated rate due to rising global temperatures. This melting contributes to rising sea levels and impacts ecosystems reliant on ice habitats.

### Positive Example 2:
Topic: Renewable Energy
Context: Advances in solar panel efficiency
Summary: Innovations in solar technology have led to more efficient panels, making solar energy a more viable and cost-effective alternative to fossil fuels.

### Negative Example 1:
Topic: Climate Change
Context: Impacts of climate change on polar ice caps
Summary: Climate change is happening everywhere and has effects on everything. (This summary is vague and lacks detail specific to polar ice caps.)

### Negative Example 2:
Topic: Renewable Energy
Context: Advances in solar panel efficiency
Summary: Renewable energy is good because it helps the environment. (This summary is overly general and misses specifics about solar panel efficiency.)

### Now, based on the topic and context provided, generate a detailed, specific summary:
Topic: {topic}
Context: {context}
Summary:"""
)

# Format the prompt with a new example
prompt = template.format(topic="AI", context="Recent advancements in machine learning")
print(prompt)

LCEL represents the modern approach to building chains in LangChain, offering a declarative way to compose LangChain components. It’s designed for production-ready applications from the start, supporting everything from simple prompt-LLM combinations to complex multi-step chains. LCEL provides built-in streaming support for optimal time-to-first-token, automatic parallel execution of independent steps, and comprehensive tracing through LangSmith. This makes it particularly valuable for production deployments where performance, reliability, and observability are necessary. For example, you can build a retrieval-augmented generation (RAG) pipeline that streams results as they’re processed, handles retries automatically, and provides detailed logging of each step.

from langchain.chat_models import ChatOpenAI
from langchain.prompts import ChatPromptTemplate
from langchain.schema.output_parser import StrOutputParser

# Simple LCEL chain
prompt = ChatPromptTemplate.from_messages([
    ("system", "You are a helpful assistant."),
    ("user", "{input}")
])
chain = prompt | ChatOpenAI() | StrOutputParser()

# Stream the results
for chunk in chain.stream({"input": "Tell me a story"}):
    print(chunk, end="", flush=True)
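To give a feel for how the `|` operator in the chain above can compose components, here is a minimal plain-Python sketch of the idea. It is an illustration only, not LangChain’s implementation: the `Step` class and the three stub components are hypothetical and stand in for a prompt template, a model, and an output parser.

```python
class Step:
    """A hypothetical stand-in for a composable pipeline component."""

    def __init__(self, func):
        self.func = func

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that runs a, then feeds its output to b
        return Step(lambda value: other.func(self.func(value)))

    def invoke(self, value):
        return self.func(value)


# Stubs standing in for a prompt template, a model, and an output parser
format_prompt = Step(lambda inputs: f"User: {inputs['input']}")
fake_model = Step(lambda prompt: {"content": prompt.upper()})
parse_output = Step(lambda message: message["content"])

chain = format_prompt | fake_model | parse_output
result = chain.invoke({"input": "hello"})
print(result)
```

Because every step exposes the same small interface, the composed chain can itself be treated as a step, which is what makes this declarative style convenient for building longer pipelines.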

Chains are one of LangChain’s most powerful features, allowing developers to create sophisticated workflows by combining multiple operations. A chain might start with loading a document, then summarizing it, and finally answering questions about it. Chains are primarily created using LCEL (LangChain Expression Language). This tool makes it straightforward to both construct custom chains and use ready-made, off-the-shelf chains.

There are several prebuilt LCEL chains available:

- create_stuff_documents_chain: Use when you want to format a list of documents into a single prompt for the LLM. Ensure the result fits within the LLM’s context window, as all documents are included.

- load_query_constructor_runnable: Generates queries by converting natural language into allowed operations. Specify a list of allowed operations before using this chain.

- create_retrieval_chain: Passes a user inquiry to a retriever to fetch relevant documents. These documents and the original input are then used by the LLM to generate a response.

- create_history_aware_retriever: Takes in conversation history and uses it to generate a query, which is then passed to a retriever.

- create_sql_query_chain: Suitable for generating SQL database queries from natural language.
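The “stuff documents” idea from the list above can be sketched without any library: join every document into one prompt and check that the result stays within a budget. This is a simplified illustration, not the prebuilt chain itself; the function name `stuff_documents`, the prompt wording, and the character budget (a crude proxy for a token limit) are assumptions.

```python
def stuff_documents(question: str, documents: list[str], max_chars: int) -> str:
    """Join all documents into one prompt; fail loudly if the budget is exceeded."""
    context = "\n\n".join(documents)
    prompt = f"Answer using only this context:\n\n{context}\n\nQuestion: {question}"
    if len(prompt) > max_chars:
        raise ValueError(
            f"Prompt is {len(prompt)} characters, over the {max_chars} budget"
        )
    return prompt


docs = ["LangChain composes LLM workflows.", "LlamaIndex indexes and retrieves data."]
prompt = stuff_documents("What does LlamaIndex do?", docs, max_chars=500)
print(prompt)
```

The explicit size check mirrors the caveat in the list: because nothing is filtered out, this approach only works when the combined documents are small enough for the model’s context window.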

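Legacy Chains: There are also several chains available from before LCEL was developed, for example SimpleSequentialChain and LLMChain.

from langchain.chains import SimpleSequentialChain, LLMChain
from langchain.llms import OpenAI
from langchain.prompts import PromptTemplate
import os

os.environ['OPENAI_API_KEY'] = "YOUR_API_KEY"

llm = OpenAI(temperature=0)

# Illustrative single-input templates (assumed here; the snippet needs them defined)
summarize_template = PromptTemplate.from_template("Summarize this text:\n{text}")
categorize_template = PromptTemplate.from_template("Assign a one-word category to this summary:\n{summary}")

summarize_chain = LLMChain(llm=llm, prompt=summarize_template)
categorize_chain = LLMChain(llm=llm, prompt=categorize_template)

# Each chain's output becomes the next chain's input
full_chain = SimpleSequentialChain(
    chains=[summarize_chain, categorize_chain],
    verbose=True
)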

Agents represent a more autonomous approach to task completion in LangChain. They can make decisions about which tools to use based on user input and can execute multi-step plans to achieve goals. Agents can access various tools like search engines, calculators, or custom APIs, and they can decide how to use these tools in response to user requests. For instance, an agent might help with research by searching the web, summarizing findings, and formatting the results. LangChain has several types of agents, including Tool Calling, OpenAI Tools/Functions, Structured Chat, JSON Chat, ReAct, and Self Ask with Search.

from langchain.agents import create_react_agent, Tool
from langchain.tools import DuckDuckGoSearchRun

search = DuckDuckGoSearchRun()
tools = [
    Tool(
        name="Search",
        func=search.run,
        description="useful for searching information online"
    )
]

# llm is a model instance; prompt must be a ReAct-style prompt that includes
# the {tools}, {tool_names}, and {agent_scratchpad} variables
agent = create_react_agent(llm=llm, tools=tools, prompt=prompt)
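The decide-then-act pattern behind agents can be sketched in plain Python. This is a deliberately simplified, single-step illustration: the rule-based `choose_action` function is a stand-in for the LLM’s decision, and the tool names and toy facts are hypothetical. A real agent would loop, feeding each observation back to the model until it decides it is done.

```python
from typing import Callable


# Hypothetical tools: plain functions keyed by name
def calculator(expression: str) -> str:
    left, operator, right = expression.split()
    a, b = float(left), float(right)
    results = {"+": a + b, "-": a - b, "*": a * b}
    return str(results[operator])


def lookup(term: str) -> str:
    facts = {"langchain": "LangChain is a framework for building LLM applications."}
    return facts.get(term.lower(), "no entry found")


TOOLS: dict[str, Callable[[str], str]] = {"calculator": calculator, "lookup": lookup}


def choose_action(request: str) -> tuple[str, str]:
    """Stand-in for the LLM's decision: pick a tool name and its input."""
    if any(symbol in request for symbol in "+-*"):
        return "calculator", request
    return "lookup", request


def run_agent(request: str) -> str:
    tool_name, tool_input = choose_action(request)  # decide
    observation = TOOLS[tool_name](tool_input)      # act
    return f"[{tool_name}] {observation}"           # report the observation


print(run_agent("6 * 7"))
print(run_agent("LangChain"))
```

The tool descriptions in a real agent play the role that the hard-coded rule plays here: they are what the model reads to decide which tool fits the request.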

Memory systems in LangChain enable applications to maintain context across interactions. This allows the creation of coherent conversational experiences or the maintenance of state in long-running processes. LangChain offers various memory types, from simple conversation buffers to more sophisticated trimming and summary-based memory systems. For example, you can use conversation memory to maintain context in a customer service chatbot, or entity memory to track specific details about users or topics over time.

There are different types of memory in LangChain, depending on the level of retention and complexity:

- Basic Memory Setup: For a basic memory approach, messages are passed directly into the model prompt. This simple form of memory uses the latest conversation history as context for responses, allowing the model to answer with reference to recent exchanges. ConversationBufferMemory is a good example of this.

- Summarized Memory: For more complex scenarios, summarized memory distills earlier conversations into concise summaries. This approach can improve performance by replacing verbose history with a single summary message, which maintains essential context without overwhelming the model. A summary message is generated by prompting the model to condense the full chat history, and it can then be updated as new interactions occur.

- Automatic Memory Management with LangGraph: LangChain’s LangGraph enables automatic memory persistence by using checkpoints to manage message history. This method allows developers to build chat applications that automatically remember conversations over long sessions. Using the MemorySaver checkpointer, LangGraph applications can maintain a structured memory without external intervention.

- Message Trimming: To manage memory efficiently, especially when dealing with limited model context, LangChain offers the trim_messages utility. This utility allows developers to keep only the most recent interactions by removing older messages, thereby focusing the chatbot on the latest context without overloading it.
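The difference between trimming and summarizing from the list above can be shown with a small plain-Python sketch. It does not use LangChain’s utilities; the function names and the placeholder summary text are assumptions, and a real system would ask the model to write the summary.

```python
Message = tuple[str, str]  # (role, content)


def trim_to_last(messages: list[Message], max_messages: int) -> list[Message]:
    """Message trimming: keep only the most recent `max_messages` entries."""
    start = max(0, len(messages) - max_messages)
    return messages[start:]


def summarize_older(messages: list[Message], keep_recent: int) -> list[Message]:
    """Summarized memory: replace older turns with one summary message.

    The summary here is a placeholder that only records how many
    messages were condensed.
    """
    if len(messages) <= keep_recent:
        return list(messages)
    split = len(messages) - keep_recent
    older, recent = messages[:split], messages[split:]
    summary = ("system", f"Summary of {len(older)} earlier messages")
    return [summary, *recent]


history = [
    ("user", "Hi, I'm John"),
    ("assistant", "Hello John!"),
    ("user", "I work on search systems"),
    ("assistant", "Good to know."),
    ("user", "What's my name?"),
]
print(trim_to_last(history, 2))
print(summarize_older(history, 2))
```

In this example the trimmed history no longer contains the user’s name, which is the trade-off trimming makes. A summary message costs one extra model call but gives the older context a chance to survive.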

from langchain.memory import ConversationBufferMemory
from langchain.chains import ConversationChain

memory = ConversationBufferMemory()
conversation = ConversationChain(
    llm=llm,
    memory=memory,
    verbose=True
)

# Memory maintains context across interactions
conversation.predict(input="Hi, I'm John")
conversation.predict(input="What's my name?")  # Will remember "John"

LangChain is a highly modular, flexible framework that simplifies building applications powered by large language models through well-structured components. With its many features (document loaders, customizable prompt templates, and advanced memory management), LangChain allows developers to handle complex workflows efficiently. This makes LangChain ideal for applications that require nuanced control over interactions, task flows, or conversational state. Next, we’ll examine LlamaIndex to see how it compares!

LlamaIndex

Core Purpose & Philosophy:

LlamaIndex is a framework designed specifically for efficient data indexing, retrieval, and querying to enhance interactions with large language models. Its core purpose is to connect LLMs with unstructured data, making it easy for applications to retrieve relevant information from massive datasets. The philosophy behind LlamaIndex is centered around creating flexible, scalable data indexing solutions that allow LLMs to access relevant data on demand, which is particularly useful for applications focused on document retrieval, search, and Q&A systems.


Architecture

LlamaIndex’s architecture is optimized for retrieval-heavy applications, with an emphasis on data indexing, flexible querying, and efficient memory management. Its architecture includes Nodes, Retrievers, and Query Engines, each designed to handle specific aspects of data processing. Nodes handle data ingestion and structuring, retrievers facilitate data extraction, and query engines streamline querying workflows, all of which work in tandem to provide fast and reliable access to stored data. LlamaIndex’s architecture also lets it connect seamlessly with vector databases, enabling scalable and high-speed document retrieval.

Key Features

Documents and Nodes are data storage and structuring units in LlamaIndex that break down large datasets into smaller, manageable components. Nodes allow data to be indexed for fast retrieval, with customizable chunking strategies for various document types (e.g., PDFs, HTML, or CSV files). Each Node also holds metadata, making it possible to filter and prioritize data based on context. For example, a Node might store a chapter of a document along with its title, author, and topic, which helps LLMs query with higher relevance.

from llama_index.core.schema import TextNode, Document
from llama_index.core.node_parser import SimpleNodeParser

# Create nodes manually
text_node = TextNode(
    text="LlamaIndex is a data framework for LLM applications.",
    metadata={"source": "documentation", "topic": "introduction"}
)

# Create nodes from documents
parser = SimpleNodeParser.from_defaults()
documents = [
    Document(text="Chapter 1: Introduction to LLMs"),
    Document(text="Chapter 2: Working with Data")
]
nodes = parser.get_nodes_from_documents(documents)

Retrievers are responsible for querying the indexed data and returning relevant documents to the LLM. LlamaIndex provides various retrieval methods, including traditional keyword-based search, dense vector-based retrieval for semantic search, and hybrid retrieval that combines both. This flexibility allows developers to select or combine retrieval methods based on their application’s needs. Retrievers can be integrated with vector databases like FAISS or KDB.AI for high-performance, large-scale search capabilities.

from llama_index.core import VectorStoreIndex, SimpleDirectoryReader
from llama_index.core.retrievers import VectorIndexRetriever

# Create an index
documents = SimpleDirectoryReader('.').load_data()
index = VectorStoreIndex.from_documents(documents)

# Vector retriever
vector_retriever = VectorIndexRetriever(
    index=index,
    similarity_top_k=2
)

# Retrieve nodes
query = "What is LlamaIndex?"
vector_nodes = vector_retriever.retrieve(query)
print(f"Vector Results: {[node.text for node in vector_nodes]}")

Query Engines act as the interface between the application and the indexed data, handling and optimizing search queries to deliver the most relevant results. They support advanced querying options such as keyword search, semantic similarity search, and custom filters, allowing developers to create sophisticated, contextualized search experiences. Query engines are adaptable, supporting parameter tuning to refine search accuracy and relevance, and making it possible to integrate LLM-driven applications directly with data sources.

from llama_index.core import VectorStoreIndex, SimpleDirectoryReader, Settings
from llama_index.llms.openai import OpenAI
from llama_index.core.node_parser import SentenceSplitter
import os

os.environ['OPENAI_API_KEY'] = "YOUR_API_KEY"

GENERATION_MODEL = 'gpt-4o-mini'
llm = OpenAI(model=GENERATION_MODEL)
Settings.llm = llm

# Create an index
documents = SimpleDirectoryReader('.').load_data()
index = VectorStoreIndex.from_documents(
    documents,
    transformations=[SentenceSplitter(chunk_size=2048, chunk_overlap=0)],
)

query_engine = index.as_query_engine()
response = query_engine.query("What is LlamaIndex?")
print(response)

LlamaIndex offers data connectors that allow for seamless ingestion from diverse data sources, including databases, file systems, and cloud storage. Connectors handle data extraction, processing, and chunking, enabling applications to work with large, complex datasets without manual formatting. This is especially helpful for applications requiring multi-source data fusion, like knowledge bases or extensive document repositories.

Other specialized data connectors are available on LlamaHub, a centralized repository within the LlamaIndex framework. These are prebuilt connectors with a unified and consistent interface that developers can use to integrate and pull in data from various sources. By using LlamaHub, developers can quickly set up data pipelines that connect their applications to external data sources without needing to build custom integrations from scratch.

LlamaHub is also open-source, so it’s open to community contributions, and new connectors and improvements are frequently added.

LlamaIndex allows for the creation of advanced indexing structures, such as vector indexes and hierarchical or graph-based indexes, to suit different types of data and queries. Vector indexes enable semantic similarity search, hierarchical indexes allow for organized, tree-like layered indexing, and graph indexes capture relationships between documents or sections, enhancing retrieval for complex, interconnected datasets. These indexing options are ideal for applications that need to retrieve highly specific information or navigate complex datasets, such as research databases or document-heavy workflows.

from llama_index.core import VectorStoreIndex, SimpleDirectoryReader

# Load documents and build index
documents = SimpleDirectoryReader("../../path_to_directory").load_data()
index = VectorStoreIndex.from_documents(documents)

With LlamaIndex, data can be filtered based on metadata, like tags, timestamps, or other contextual information. This filtering enables precise retrieval, especially in cases where data segmentation is required, such as filtering results by category, recency, or relevance.

from llama_index.core import VectorStoreIndex, Document
from llama_index.core.retrievers import VectorIndexRetriever
from llama_index.core.vector_stores import MetadataFilters, ExactMatchFilter

# Create documents with metadata
doc1 = Document(text="LlamaIndex introduction.", metadata={"topic": "introduction", "date": "2024-01-01"})
doc2 = Document(text="Advanced indexing techniques.", metadata={"topic": "indexing", "date": "2024-01-05"})
doc3 = Document(text="Using metadata filtering.", metadata={"topic": "metadata", "date": "2024-01-10"})

# Create and build an index with documents
index = VectorStoreIndex.from_documents([doc1, doc2, doc3])

# Define metadata filters, filter on the 'date' metadata column
filters = MetadataFilters(filters=[ExactMatchFilter(key="date", value="2024-01-05")])

# Set up the vector retriever with the defined filters
vector_retriever = VectorIndexRetriever(index=index, filters=filters)

# Retrieve nodes
query = "efficient indexing"
vector_nodes = vector_retriever.retrieve(query)
print(f"Vector Results: {[node.text for node in vector_nodes]}")

>>> Vector Results: ['Advanced indexing techniques.']

When to Choose Each Framework

LangChain Primary Focus

Complex Multi-Step Workflows

LangChain’s core strength lies in orchestrating sophisticated workflows that involve multiple interacting components. Modern LLM applications often require breaking down complex tasks into manageable steps that can be processed sequentially or in parallel. LangChain provides a robust framework for chaining operations while maintaining clear data flow and error handling, making it ideal for systems that need to gather, process, and synthesize information across multiple steps.

Key capabilities:

- LCEL for declarative workflow definition

- Built-in error handling and retry mechanisms

Extensive Agent Capabilities

The agent system in LangChain enables autonomous decision-making in LLM applications. Rather than following predetermined paths, agents dynamically choose from available tools and adapt their approach based on intermediate results. This makes LangChain particularly valuable for applications that need to handle unpredictable user requests or navigate complex decision trees, such as research assistants or advanced customer service systems.

Common agent tools:

- Custom tool creation for specific domains and use cases

Memory Management

LangChain’s approach to memory management solves the challenge of maintaining context and state across interactions. The framework provides sophisticated memory systems that can track conversation history, maintain entity relationships, and store relevant context efficiently.

LlamaIndex Primary Focus

Advanced Data Retrieval

LlamaIndex excels at making large amounts of custom data accessible to LLMs efficiently. The framework provides sophisticated indexing and retrieval mechanisms that go beyond simple vector similarity searches by taking into account the structure and relationships within your data. This becomes particularly valuable when dealing with large document collections or technical documentation that require precise retrieval. For example, when dealing with large libraries of financial documents, retrieving the right information is a must.

Key retrieval features:

- Multiple retrieval strategies (vector, keyword, hybrid)

- Customizable relevance scoring (measuring whether the query was actually answered by the system’s response)
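To make the vector, keyword, and hybrid strategies listed above concrete, here is a plain-Python sketch that blends a keyword-overlap score with cosine similarity. It is an illustration of the general idea rather than LlamaIndex’s implementation; the function names, the `alpha` weighting, and the toy two-dimensional “embeddings” are all assumptions.

```python
import math


def keyword_score(query: str, text: str) -> float:
    """Fraction of query words that also appear in the text."""
    query_words = set(query.lower().split())
    text_words = set(text.lower().split())
    return len(query_words & text_words) / len(query_words) if query_words else 0.0


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_rank(query, query_vec, docs, alpha=0.5):
    """Rank docs by alpha * vector similarity + (1 - alpha) * keyword overlap."""
    scored = [
        (alpha * cosine(query_vec, vec) + (1 - alpha) * keyword_score(query, text), text)
        for text, vec in docs
    ]
    return sorted(scored, reverse=True)


# Toy 2-d "embeddings"; real ones come from an embedding model
docs = [
    ("quarterly revenue report", [0.9, 0.1]),
    ("employee onboarding guide", [0.1, 0.9]),
    ("annual revenue forecast", [0.8, 0.3]),
]
ranked = hybrid_rank("revenue report", [1.0, 0.0], docs)
for score, text in ranked:
    print(f"{score:.2f}  {text}")
```

Setting `alpha` to 1.0 gives pure vector retrieval and 0.0 gives pure keyword retrieval, so a single parameter covers all three strategies in this sketch.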

RAG Applications

While LangChain is very capable for RAG pipelines, LlamaIndex also provides a comprehensive suite of tools specifically designed for Retrieval-Augmented Generation applications. The framework handles the complex tasks of document processing, chunking, and retrieval optimization, allowing developers to focus on building applications rather than managing RAG implementation details.

RAG optimizations:

- Advanced chunking strategies

- Context window management

- Response synthesis techniques

- Reranking


Making the Choice

The decision between frameworks often depends on your application’s primary complexity:

- Choose LangChain when your focus is on process orchestration, agent behavior, and complex workflows

- Choose LlamaIndex when your priority is data organization, retrieval, and RAG implementation

- Consider using both frameworks together for applications requiring both sophisticated workflows and advanced data handling

It is also important to remember that, in many cases, either of these frameworks will be able to complete your task. They each have their strengths, but for basic use cases such as a naive RAG workflow, either LangChain or LlamaIndex will do the job. In some cases, the main determining factor might be which framework you’re most comfortable working with.

Can I Use Both Together?

Yes, you can indeed use both LangChain and LlamaIndex together. This combination of frameworks can provide a powerful foundation for building production-ready LLM applications that handle both process and data complexity effectively. By integrating the two frameworks, you can leverage the strengths of each and create sophisticated applications that seamlessly index, retrieve, and interact with extensive information in response to user queries.

An example of this integration could be wrapping LlamaIndex functionality like indexing or retrieval within a custom LangChain agent. This would combine the indexing and retrieval strengths of LlamaIndex with the orchestration and agentic strengths of LangChain.
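The shape of that integration can be sketched with stand-ins for both sides. Neither class below belongs to either library: `ToyQueryEngine` is a hypothetical substitute for an index-backed query engine, and `ToolSpec` is a hypothetical substitute for an agent framework’s tool wrapper. The point is only that the retrieval side is exposed to the orchestration side as a single callable.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolSpec:
    """Hypothetical stand-in for an agent framework's tool wrapper."""
    name: str
    description: str
    func: Callable[[str], str]


class ToyQueryEngine:
    """Hypothetical stand-in for an index-backed query engine."""

    def __init__(self, passages: list[str]):
        self.passages = passages

    def query(self, question: str) -> str:
        words = set(question.lower().split())
        # Return the passage sharing the most words with the question
        return max(self.passages, key=lambda p: len(words & set(p.lower().split())))


engine = ToyQueryEngine([
    "invoices are archived after ninety days",
    "refunds are processed within five days",
])

# The retrieval side is exposed to the orchestration side as one callable tool
knowledge_tool = ToolSpec(
    name="knowledge_base",
    description="Look up company policy passages",
    func=lambda question: engine.query(question),
)
answer = knowledge_tool.func("when are refunds processed")
print(answer)
```

With the real libraries, the same pattern would mean passing a function that calls a LlamaIndex query engine as the `func` of a LangChain tool, so the agent can decide when a lookup is needed.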

Summary Table:

Conclusion

Choosing between LangChain and LlamaIndex depends on aligning each framework’s strengths with your application’s needs. LangChain excels at orchestrating complex workflows and agent behavior, making it ideal for dynamic, context-aware applications with multi-step processes. LlamaIndex, meanwhile, is optimized for data handling, indexing, and retrieval, making it a good fit for applications requiring precise access to structured and unstructured data, such as RAG pipelines.

For process-driven workflows, LangChain is likely the best fit, while LlamaIndex is ideal for advanced data retrieval techniques. Combining both frameworks can provide a powerful foundation for applications needing sophisticated workflows and robust data handling, streamlining development and enhancing AI solutions.