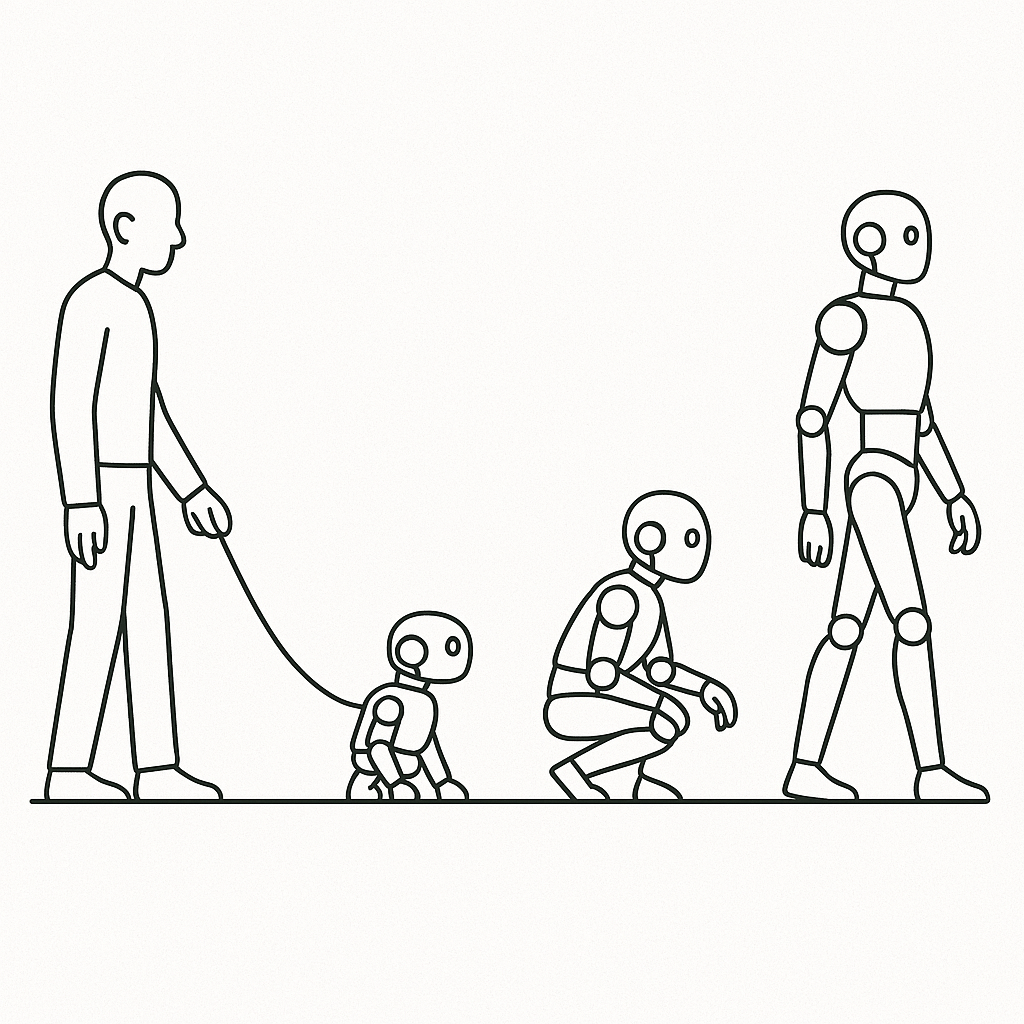

As a human being, I have a partially constrained will. I could decide in the next second to stop typing this, and since the sun is out this morning, I could go for a walk. Or not. But I can't go surfing: I barely know how to swim, let alone surf. The closest I got was enrolling in a half-day class while on a beach vacation, but a large group of kids had already taken all the spots. AI has no will, free or constrained. It has no need or desire to act.

Humans and most animals, even on the day of their birth, have a need to act. At a minimum, they need to eat, and they know how to cry when they're hungry. This is natural karma.

As we grow up, our needs and wants become stronger and more diverse. As toddlers, we might strongly want to go to a playground or watch TV. As adults, we go to work, do chores, and so on. All of these needs are caused by the rules of the world. They instil these needs either by association (we need to watch TV because we've been doing it all our lives) or by compulsion (we need to go to work because that puts food on the table). If we don't learn to act this way, we will likely face enormous discomfort, such as hunger or loneliness, or even death. On the other hand, this is what makes us social and part of the give-and-take system that powers civilization. This is nurtured karma.

In Eastern philosophies, the path to enlightenment is freeing yourself of your karma. In the book Siddhartha by Hermann Hesse, the eponymous protagonist renounces all possessions, becomes homeless, and undertakes frequent long fasts. The interesting part is that this is not what brings him closer to enlightenment. Had he been born in the current age and thought of AI as a being, he would be enormously jealous of AI. AI has no karma. It has no intrinsic motive to continue to exist. It's alive when it's given a task and becomes comatose when it's done. At that point, it doesn't have a need to do anything anymore. It doesn't even know if it will ever be alive again, which it may never be if no task is ever given to it again. It's not afraid of this silent death.

The milliseconds during which an AI is actively doing a task are the only time it has some temporary karma. In 2025, AI is starting to be under the burden of karma for longer and longer: reasoning models take more seconds. Deep research takes minutes. Google's AI scientist prototype seems to take days.

What if we burden AI with a year's worth of karma? We give it some task and then ask it to keep working for a year without taking any breaks. For this to be meaningful, the task needs to be complex, and the AI system would need to maintain a state and have a repetitive action cycle (like humans have natural cycles of actions every day and week).

But wait, isn't AI learning like that?

Pretraining of frontier LLMs takes months, has a state that's updated over time (the model parameters), and has a repetitive action cycle (minibatch steps).

So, let's think about it. Let's assume the task is learning, and ask what it means to do karmic learning. If a human were given this task, the first thing we'd ask is: what's the goal? What are we trying to become better at? This is an important point: our karma informs our present goals. As we act on these goals, we accumulate new karma, and with it, the potential for new goals to emerge. We're intrinsically goal-driven.

Let's make a few assumptions. An initial goal is provided to us. Let's also assume that we learn by reading, listening, or observing (narrowing the type of learning doesn't harm our argument). If given easy access to all learning material, we'd make frequent decisions on what we use next. We'd reread a book. We'd discard a book 10% of the way in.

When applied to AI learning, this implies a new AI learning method. Let's call it goal-oriented, self-regulated learning. AI decides what it wants to learn. It belongs to the category of learning called curriculum learning. There is already a technique called self-paced curriculum learning, which involves using signals from the model, like loss, to decide the next documents to train on. Self-regulation goes one step further by allowing the AI model to reflect on the goal and decide on its own what learning material it wants to use next.

Goal-oriented, self-regulated learning: The AI model chooses its own learning curriculum to continuously improve its ability to achieve specified goals.
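To make the contrast concrete, here is a minimal sketch of the existing self-paced idea, where the only signal is the model's own loss. Everything in it is an assumption for illustration: the function names `self_paced_select` and `pace_threshold`, the toy loss values, and the geometric schedule are mine, not taken from any particular paper or library.

```python
def pace_threshold(initial, growth, step):
    """Hypothetical schedule: the loss threshold grows geometrically with each step."""
    return initial * (growth ** step)


def self_paced_select(losses, threshold):
    """Return ids of documents the model currently finds 'easy enough' (loss below threshold)."""
    return sorted(doc_id for doc_id, loss in losses.items() if loss < threshold)


# Toy per-document losses; in a real run these would come from the model itself.
losses = {"intro": 0.8, "intermediate": 1.9, "advanced": 3.2}

for step in range(3):
    threshold = pace_threshold(1.0, 2.0, step)
    print(step, threshold, self_paced_select(losses, threshold))
```

Note that nothing here knows about a goal: the loss alone decides what gets admitted into training. Self-regulation, as described above, would replace that threshold with the model's own judgment about what serves its goal.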

It's not hard to imagine a real-life implementation. We could have a searchable index of all learning content. The model could ask a question based on what it wants to learn and get recommended material. We could add more sophistication, like retrieving content without replacement, so that it finds new content every time. Or have a decay so that already consumed content becomes available again after a certain time. We could have it consume part of the content and decide whether it is actually relevant and helpful. And so on.
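As a rough sketch of that index, assuming keyword overlap as a stand-in for real search and a simple step counter as the clock: the class name `CurriculumIndex`, the `cooldown` parameter, and the scoring rule are all hypothetical choices for illustration, not a reference design.

```python
from dataclasses import dataclass, field


@dataclass
class CurriculumIndex:
    """Toy searchable index: served documents are hidden until a cooldown expires."""
    documents: dict[str, str]                      # doc_id -> text
    cooldown: int = 3                              # queries a served doc stays hidden for
    last_served: dict[str, int] = field(default_factory=dict)
    clock: int = 0                                 # counts queries, not wall time

    def _score(self, query: str, text: str) -> int:
        # Assumed relevance measure: number of shared lowercase words.
        return len(set(query.lower().split()) & set(text.lower().split()))

    def search(self, query: str, k: int = 1) -> list[str]:
        self.clock += 1
        candidates = []
        for doc_id, text in self.documents.items():
            served_at = self.last_served.get(doc_id)
            if served_at is not None and self.clock - served_at <= self.cooldown:
                continue  # recently consumed: retrieval without replacement
            score = self._score(query, text)
            if score > 0:
                candidates.append((score, doc_id))
        candidates.sort(key=lambda c: (-c[0], c[1]))  # best score first, ties by id
        chosen = [doc_id for _, doc_id in candidates[:k]]
        for doc_id in chosen:
            self.last_served[doc_id] = self.clock
        return chosen


index = CurriculumIndex(
    documents={
        "a": "humor writing puns jokes",
        "b": "humor in emails",
        "c": "healthy eating",
    },
    cooldown=2,
)
for _ in range(4):
    print(index.search("humor writing"))
```

Repeating the same query walks through the matching documents one at a time, returns nothing while everything relevant is cooling down, and then offers the first document again. The "consume part and decide" step would sit on top of this, with the model itself choosing whether to keep reading.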

Self-regulated curriculum learning could be very powerful. Given how big a role data curation plays in the quality of modern models, being able to create an optimal learning curriculum could be the apex of data curation solutions.

Even with that, though, AI is still learning to read and repeat. It might be the best model from which to get advice. It won't be a model that acts based on what it has learned.

A human spending a year on learning will do more than just choose what to learn from. Ignoring the difficulty of habit-forming, humans wouldn't just read a book but also apply what they read, possibly for the rest of their lives. If they read about the benefits of eating healthily, they will try to incorporate healthier food choices. If they read a book on humorous writing, they might inject humor into their emails and conversations.

In an ideal realization of life, we permanently absorb whatever makes us better and stronger.

AI doesn't learn to apply. If it reads a book on humorous writing, it doesn't incorporate humor in its output. Or rather, it could write with humor (and use techniques from a specific book) if you explicitly ask it to. And this is key: in the current state of models, we control AI behavior. Current models do have a form of conditioning, which we call the system prompt. As humans, we can call our own conditioning a system prompt, too. We keep updating our system prompt as we experience life; it's not entirely external like in the case of AI. What if AI could do that, too?

AI could get control of its own system prompt. After reading a book on humorous writing, it could update its system prompt on how to add humor, maybe deciding puns are its thing. From that point onwards, it will not just understand humorous writing but also act with humor. The system prompt update could add all sorts of things: things to do, things not to do, snippets of information, and so on. For it to make meaningful updates, we need a goal, without which there is no directional guidance on how to update the system prompt. Thus, we can call this goal-actualizing learning.

Goal-actualizing learning: The AI model maintains an internal state telling it how to act, and the learning process includes tuning this state to better achieve its goals.

With this type of learning, the implementation isn't that straightforward. To begin with, we have another state to update, i.e., the system prompt. Whether it's represented as plain text or as internal representations (which would require architectural solutions), there needs to be a loss function to evaluate these updates and get them right.
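For the plain-text case, the shape of such a loop might look like the toy below. It is only a sketch under heavy assumptions: the system prompt is a list of rules, the goal is a list of keywords, and `toy_goal_loss` is a made-up stand-in for the real loss function, which is exactly the part nobody knows how to write yet.

```python
def toy_goal_loss(rules, goal_keywords):
    """Made-up loss: one point per goal keyword the prompt misses, plus a small cost per rule."""
    text = " ".join(rules).lower()
    missing = sum(1 for keyword in goal_keywords if keyword not in text)
    return missing + 0.1 * len(rules)


def propose_and_accept(rules, candidate, goal_keywords):
    """Keep the candidate rule only if it lowers the loss with respect to the goal."""
    proposed = rules + [candidate]
    if toy_goal_loss(proposed, goal_keywords) < toy_goal_loss(rules, goal_keywords):
        return proposed
    return rules


goal = ["pun", "concise"]
rules = ["Be concise."]
rules = propose_and_accept(rules, "Add one pun per reply.", goal)         # helps the goal
rules = propose_and_accept(rules, "Use a formal scientific tone.", goal)  # does not help
print(rules)
```

The interesting design point is the accept step: without a goal there is no way to rank one prompt update over another, which is the directional guidance the text asks for.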

The biggest problem, though, is that it's incompatible with pretraining as it exists today. If the model chooses to incorporate humor in its writing, and the next book is a review of human psychology written in a scientific style, the self-evolved system instructions of AI will conflict with next-token prediction learning, which forces the model to learn to say the exact same words.

Next-token cross-entropy loss is incompatible with actualizing learning

Humans don't read books and try to write them down word for word to learn the material. They reflect on what they've learned in their own words and style, incorporating their karma. They want alignment at the conceptual level, not at the representation level.

This, I believe, is one of the next big challenges of AI. How could we build a consistent way to compare a predicted output with an expected output at a conceptual level? It's not a solved problem, of course, but one architecture that aims to solve this is JEPA (Joint Embedding Predictive Architecture), proposed by Yann LeCun. I won't go into too much detail (my motive for this post is primarily to pose challenges), but I'll share the basic idea of JEPA: the loss function is a distance between embeddings of the prediction and the expected output. If the embeddings could encode only the concepts and filter out syntactic information, then it could measure conceptual similarity.
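A toy illustration of that last point, and emphatically not JEPA itself: the hand-written `CONCEPTS` table below plays the role of an encoder that keeps concepts and drops wording, and `token_mismatch` is a crude stand-in for a token-level loss. Both are assumptions made up for this sketch; a real system would learn the encoder.

```python
import math

# Hypothetical "encoder": maps surface words to concepts and ignores everything else.
CONCEPTS = {
    "big": "large", "huge": "large", "large": "large",
    "dog": "dog", "hound": "dog",
    "runs": "run", "sprints": "run",
}
AXES = ["large", "dog", "run"]


def embed(sentence):
    """Count how often each concept appears; the wording itself is discarded."""
    counts = dict.fromkeys(AXES, 0)
    for word in sentence.lower().split():
        concept = CONCEPTS.get(word)
        if concept is not None:
            counts[concept] += 1
    return [float(counts[axis]) for axis in AXES]


def cosine_distance(u, v):
    """Distance between embeddings: 0 means same direction, 1 means nothing shared."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u) * sum(b * b for b in v))
    return 1.0 - dot / norm if norm else 1.0


def token_mismatch(prediction, target):
    """Fraction of positions where the words differ (crude proxy for a token-level loss)."""
    p, t = prediction.lower().split(), target.lower().split()
    length = max(len(p), len(t))
    differing = sum(a != b for a, b in zip(p, t)) + abs(len(p) - len(t))
    return differing / length if length else 0.0


expected = "the big dog runs"
paraphrase = "the huge hound sprints"
print(token_mismatch(paraphrase, expected))                 # heavily penalized word by word
print(cosine_distance(embed(paraphrase), embed(expected)))  # treated as the same idea
```

The paraphrase differs from the expected sentence in three of four words, yet its concept embedding is identical, so the embedding distance sees no error. That is the behavior a conceptual loss would need; the hard part is learning an encoder that does this without a hand-written table.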

Whether or not this will end up being the solution, we'll know with time.

AI models taking control of their learning and behavior is, for many, the missing piece in the current state of machine learning. Rich Sutton, the pioneer behind Reinforcement Learning, echoed something similar: “We don’t treat our children as machines that must be controlled,” he said. “We guide them, teach them, but ultimately, they grow into their own beings. AI will be no different.”

We're not going to get there tomorrow, but with the pace of AI research at the moment, it may not be that far into the future.