1. Introduction

Ever since the introduction of the self-attention mechanism, Transformers have been the top choice for Natural Language Processing (NLP) tasks. Self-attention-based models are highly parallelizable and require substantially fewer parameters, making them much more computationally efficient, less prone to overfitting, and easier to fine-tune for domain-specific tasks [1]. Furthermore, the key advantage of transformers over previous models (like RNN, LSTM, GRU and other neural-based architectures that dominated the NLP domain prior to the introduction of Transformers) is their ability to process input sequences of any length without losing context, through the use of the self-attention mechanism that focuses on different parts of the input sequence, and how those parts interact with other parts of the sequence, at different times [2]. Because of these qualities, Transformers have made it possible to train language models of unprecedented size, with more than 100B parameters, paving the way for the current state-of-the-art models like the Generative Pre-trained Transformer (GPT) and the Bidirectional Encoder Representations from Transformers (BERT) [1].

However, in the field of computer vision, convolutional neural networks (CNNs) remain dominant in most, if not all, computer vision tasks. While there has been an increasing collection of research work that attempts to implement self-attention-based architectures to perform computer vision tasks, very few have reliably outperformed CNNs with promising scalability [3]. The main challenge with integrating the transformer architecture with image-related tasks is that, by design, the self-attention mechanism, which is the core component of transformers, has a quadratic time complexity with respect to sequence length, i.e. O(n²), as shown in Table I and as discussed further in Part 2.1. This is usually not a problem for NLP tasks that use a relatively small number of tokens per input sequence (e.g., a 1,000-word paragraph will only have 1,000 input tokens, or a few more if sub-word units are used as tokens instead of full words). However, in computer vision, the input sequence (the image) can have a token count that is orders of magnitude greater than that of NLP input sequences. For example, a relatively small 300 x 300 x 3 image can easily have up to 270,000 tokens and require a self-attention map with up to 72.9 billion entries (270,000²) when self-attention is applied naively.
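The quadratic growth described above can be checked with a few lines of arithmetic. The sketch below is only an illustration; the helper name `attention_map_entries` is hypothetical and simply squares the token count.

```python
def attention_map_entries(num_tokens: int) -> int:
    """Number of pairwise attention scores for a sequence of num_tokens tokens."""
    return num_tokens * num_tokens


# Text example: a 1,000-word paragraph with one token per word.
text_tokens = 1_000

# Image example: every value of a 300 x 300 x 3 image treated as its own token.
image_tokens = 300 * 300 * 3

print(text_tokens, attention_map_entries(text_tokens))    # 1000 1000000
print(image_tokens, attention_map_entries(image_tokens))  # 270000 72900000000
```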

Because of this, most of the research work that attempts to use self-attention-based architectures to perform computer vision tasks did so either by applying self-attention locally, using transformer blocks in conjunction with CNN layers, or by only replacing specific components of the CNN architecture while maintaining the overall structure of the network; never by only using a pure transformer [3]. The goal of Dosovitskiy et al. in their work, “An Image is Worth 16×16 Words: Transformers for Image Recognition at Scale”, is to show that it is indeed possible to implement image classification by applying self-attention globally through the use of the basic Transformer encoder architecture, while at the same time requiring significantly less computational resources to train, and outperforming state-of-the-art convolutional neural networks like ResNet.

2. The Transformer

Transformers, introduced in the paper titled “Attention is All You Need” by Vaswani et al. in 2017, are a class of neural network architectures that have revolutionized various natural language processing and machine learning tasks. A high-level view of the architecture is shown in Fig. 1.

and decoder components (right block) [2]

Since their introduction, transformers have served as the foundation for many state-of-the-art models in NLP, including BERT, GPT, and more. Fundamentally, they are designed to process sequential data, such as text data, without the need for recurrent or convolutional layers [2]. They achieve this by relying heavily on a mechanism called self-attention.

The self-attention mechanism is a key innovation introduced in the paper that allows the model to capture relationships between different elements in a given sequence by weighing the importance of each element in the sequence with respect to the other elements [2]. Say, for instance, you want to translate the following sentence:

“The animal didn’t cross the street because it was too tired.”

What does the word “it” in this particular sentence refer to? Is it referring to the street or the animal? For us humans, this is a trivial question to answer. But for an algorithm, this can be considered a complex task to perform. However, through the self-attention mechanism, the transformer model is able to estimate the relative weight of each word with respect to all the other words in the sentence, allowing the model to associate the word “it” with “animal” in the context of our given sentence [4].

2.1 The Self-Attention Mechanism

A transformer transforms a given input sequence by passing each element through an encoder (or a stack of encoders) and a decoder (or a stack of decoders) block, in parallel [2]. Each encoder block contains a self-attention block and a feed-forward neural network. Here, we only focus on the transformer encoder block, as this was the component used by Dosovitskiy et al. in their Vision Transformer image classification model.

As is the case with general NLP applications, the first step in the encoding process is to turn each input word into a vector using an embedding layer, which converts our text data into a vector that represents our word in the vector space while retaining its contextual information. We then compile these individual word embedding vectors into a matrix X, where each row i represents the embedding of element i in the input sequence. Then, we create three sets of vectors for each element in the input sequence; namely, Key (K), Query (Q), and Value (V). These sets are derived by multiplying matrix X with the corresponding trainable weight matrices WQ, WK, and WV [2].
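In matrix form, the three projections can be written as below. This is a sketch following the notation of [2]; the dimension symbols n (sequence length), d_model (embedding size), d_k and d_v are assumptions introduced here for illustration.

```latex
\begin{aligned}
Q &= X W^{Q}, & K &= X W^{K}, & V &= X W^{V}, \\
X &\in \mathbb{R}^{n \times d_{\mathrm{model}}}, &
W^{Q}, W^{K} &\in \mathbb{R}^{d_{\mathrm{model}} \times d_k}, &
W^{V} &\in \mathbb{R}^{d_{\mathrm{model}} \times d_v}
\end{aligned}
```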

Afterwards, we perform a matrix multiplication between Q and the transpose of K, divide the result by the square root of the dimensionality of K, and then apply a softmax function to normalize the output and generate weight values between 0 and 1 [2].

We will call this intermediary output the attention factor. This factor, shown in Eq. 4, represents the weight that each element in the sequence contributes to the calculation of the attention value at the current position (the word being processed). The idea behind the softmax operation is to amplify the words that the model thinks are relevant to the current position, and attenuate the ones that are irrelevant. For example, in Fig. 3, the input sentence “He later went to report Malaysia for one year” is passed into a BERT encoder unit to generate a heatmap that illustrates the contextual relationship of each word with every other word. We can see that words that are deemed contextually associated produce higher weight values in their respective cells, visualized in a dark pink color, while words that are contextually unrelated have low weight values, represented in pale pink.

Finally, we multiply the attention factor matrix with the value matrix V to compute the aggregated self-attention value matrix Z of this layer [2], where each row i in Z represents the attention vector for word i in our input sequence. This aggregated value essentially bakes the “context” provided by other words in the sentence into the current word being processed. The attention equation shown in Eq. 5 is sometimes also called the Scaled Dot-Product Attention.
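The steps above (project X into Q, K, and V, scale the Q·Kᵀ scores, apply a row-wise softmax, then weight V) can be sketched in plain Python. This is a minimal illustration rather than the implementation used in [2] or [3]: the helper names, the toy input, and the identity weight matrices are all assumptions chosen to keep the example small.

```python
import math


def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(m):
    return [list(row) for row in zip(*m)]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x, w_q, w_k, w_v):
    """Return (attention factor, Z) for input matrix x."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])                      # dimensionality of the key vectors
    scores = matmul(q, transpose(k))     # one score per (query, key) pair
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    z = matmul(weights, v)               # context-aware output, one row per token
    return weights, z


# Toy input: 3 tokens with 2-dimensional embeddings (made-up values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
eye = [[1.0, 0.0], [0.0, 1.0]]           # identity stands in for trained weights
weights, z = self_attention(x, eye, eye, eye)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1, and the score matrix has one entry per pair of tokens, which is where the quadratic cost in sequence length comes from.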

2.2 The Multi-Headed Self-Attention

In the paper by Vaswani et al., the self-attention block is further augmented with a mechanism known as “multi-headed” self-attention, shown in Fig. 4. The idea behind this is that instead of relying on a single attention mechanism, the model employs multiple parallel attention “heads” (in the paper, Vaswani et al. used 8 parallel attention layers), wherein each of these attention heads learns different relationships and provides unique perspectives on the input sequence [2]. This improves the performance of the attention layer in two important ways:

First, it expands the ability of the model to focus on different positions within the sequence. Depending on the variations involved in the initialization and training process, the calculated attention value for a given word (Eq. 5) can be dominated by certain other unrelated words or phrases, or even by the word itself [4]. By computing multiple attention heads, the transformer model has multiple opportunities to capture the correct contextual relationships, thus becoming more robust to variations and ambiguities in the input.

Second, since the weight matrices that produce Q, K, and V are randomly initialized independently for each attention head, the training process yields several Z matrices (Eq. 5), which gives the transformer multiple representation subspaces [4]. For example, one head might focus on syntactic relationships while another might attend to semantic meanings. Through this, the model is able to capture more diverse relationships within the data.
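For reference, the multi-head formulation in [2] can be summarized as follows. The concatenation step and the output projection W^O come from [2] and are not described in the text above; h is the number of heads (h = 8 in [2]).

```latex
\begin{aligned}
\mathrm{head}_i &= \mathrm{Attention}\!\left(X W_i^{Q},\; X W_i^{K},\; X W_i^{V}\right),
  \qquad i = 1, \dots, h \\
\mathrm{MultiHead}(X) &= \mathrm{Concat}\!\left(\mathrm{head}_1, \dots, \mathrm{head}_h\right) W^{O}
\end{aligned}
```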

3. The Vision Transformer

The fundamental innovation behind the Vision Transformer (ViT) revolves around the idea that images can be processed as sequences of tokens rather than grids of pixels. In traditional CNNs, input images are analyzed as overlapping tiles through a sliding convolutional filter, which are then processed hierarchically through a series of convolutional and pooling layers. In contrast, ViT treats the image as a collection of non-overlapping patches, which are treated as the input sequence to a standard Transformer encoder unit.

derived from Fig. 1 (right) [3].

By defining the input tokens to the transformer as non-overlapping image patches rather than individual pixels, we are able to reduce the dimension of the attention map from (H × W)² to (n_ph × n_pw)², given n_ph ≪ H and n_pw ≪ W, where H and W are the height and width of the image, and n_ph and n_pw are the number of patches along the corresponding axes. By doing so, the model is able to handle images of varying sizes without requiring extensive architectural changes [3].
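To get a feel for the size of this reduction, the sketch below compares the two attention-map sizes. The 224 x 224 image size is an assumption chosen for illustration; the 16 x 16 patch size is the one discussed in this report. The helper name `attention_map_size` is hypothetical.

```python
def attention_map_size(height, width, patch=1):
    """Return (number of tokens, attention-map entries) for patch x patch tokens."""
    assert height % patch == 0 and width % patch == 0, "image must tile evenly"
    num_tokens = (height // patch) * (width // patch)
    return num_tokens, num_tokens ** 2


H = W = 224   # assumed image height and width
P = 16        # patch size

pixel_tokens, pixel_map = attention_map_size(H, W)      # one token per pixel
patch_tokens, patch_map = attention_map_size(H, W, P)   # one token per patch

print(pixel_tokens, pixel_map)  # 50176 2517630976
print(patch_tokens, patch_map)  # 196 38416
```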

These image patches are then linearly embedded into lower-dimensional vectors, similar to the word embedding step that produces matrix X in Part 2.1. Since transformers contain neither recurrence nor convolutions, they lack the capacity to encode positional information of the input tokens and are therefore permutation invariant [2]. Hence, as is done in NLP applications, a positional embedding is added to each linearly encoded vector prior to input into the transformer model, in order to encode the spatial information of the patches, ensuring that the model understands the position of each token relative to the other tokens within the image. Additionally, an extra learnable classifier (cls) embedding is added to the input sequence. All of these (the linear embeddings of each 16 x 16 patch, the extra learnable classifier embedding, and their corresponding positional embedding vectors) are passed through a standard Transformer encoder unit as discussed in Part 2. The output corresponding to the added learnable cls embedding is then used to perform classification via a standard MLP classifier head [3].
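The input pipeline described above can be sketched end to end on a tiny image. Everything below is illustrative: the function names are hypothetical, the 4 x 4 single-channel image and the embedding size of 3 are toy values, and the random weights stand in for parameters that would be learned during training. Positional embeddings are combined with the tokens by element-wise addition here.

```python
import random


def split_into_patches(image, patch):
    """Split an H x W x C image (nested lists) into flattened, non-overlapping patches."""
    height, width = len(image), len(image[0])
    assert height % patch == 0 and width % patch == 0, "image must tile evenly"
    patches = []
    for top in range(0, height, patch):
        for left in range(0, width, patch):
            flat = []
            for r in range(top, top + patch):
                for c in range(left, left + patch):
                    flat.extend(image[r][c])   # all channel values of one pixel
            patches.append(flat)
    return patches


def linear_embed(vector, weights):
    """Project a flattened patch with a (len(vector) x D) weight matrix."""
    dim = len(weights[0])
    return [sum(vector[i] * weights[i][j] for i in range(len(vector)))
            for j in range(dim)]


def build_input_sequence(image, patch, weights, cls_token, pos_embed):
    """Embed patches, prepend the cls token, and add positional embeddings."""
    tokens = [linear_embed(p, weights) for p in split_into_patches(image, patch)]
    tokens = [cls_token] + tokens
    assert len(tokens) == len(pos_embed), "one positional embedding per token"
    return [[t + p for t, p in zip(tok, pos)] for tok, pos in zip(tokens, pos_embed)]


random.seed(0)
H, W, C, P, D = 4, 4, 1, 2, 3            # toy sizes, not those used in [3]
image = [[[float(r * W + c)] for c in range(W)] for r in range(H)]
weights = [[random.gauss(0.0, 0.02) for _ in range(D)] for _ in range(P * P * C)]
cls_token = [0.0] * D                     # learnable in practice; zeros here
num_tokens = (H // P) * (W // P) + 1      # patches plus the cls token
pos_embed = [[random.gauss(0.0, 0.02) for _ in range(D)] for _ in range(num_tokens)]

sequence = build_input_sequence(image, P, weights, cls_token, pos_embed)
print(len(sequence), len(sequence[0]))    # 5 3
```

The resulting `sequence` is what would be fed into the Transformer encoder; after encoding, only the output at index 0 (the cls position) would be passed to the classification head.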

4. The Result

In the paper, the two largest models, ViT-H/14 and ViT-L/16, both pre-trained on the JFT-300M dataset, are compared to state-of-the-art CNNs, as shown in Table II. These include Big Transfer (BiT), which employs supervised transfer learning with large ResNets, and Noisy Student, a large EfficientNet trained using semi-supervised learning on ImageNet and JFT-300M without labels [3]. At the time of the study's publication, Noisy Student held the state-of-the-art position on ImageNet, while BiT-L held it on the other datasets used in the paper [3]. All models were trained on TPUv3 hardware, and the number of TPUv3-core-days that it took to train each model was recorded.

We can see from the table that Vision Transformer models pre-trained on the JFT-300M dataset outperform ResNet-based baseline models on all datasets, while at the same time requiring significantly less computational resources (TPUv3-core-days) to pre-train. A secondary ViT-L/16 model was also trained on the much smaller public ImageNet-21k dataset, and is shown to also perform relatively well while requiring up to 97% less computational resources compared to its state-of-the-art counterparts [3].

Fig. 6 shows the comparison of the performance between the BiT and ViT models (measured using the ImageNet Top-1 accuracy metric) across pre-training datasets of varying sizes. We see that the ViT-Large models underperform compared to the base models on small datasets like ImageNet, and achieve roughly equal performance on ImageNet-21k. However, when pre-trained on larger datasets like JFT-300M, the ViT clearly outperforms the base model [3].

Further exploring how the size of the dataset relates to model performance, the authors trained the models on various random subsets of the JFT dataset: 9M, 30M, 90M, and the full JFT-300M. Additional regularization was not added on the smaller subsets in order to assess the intrinsic model properties (and not the effect of regularization) [3]. Fig. 7 shows that ViT models overfit more than ResNets on smaller datasets. The data shows that ResNets perform better with smaller pre-training datasets but plateau sooner than ViT, which then outperforms the former with larger pre-training datasets. The authors conclude that on smaller datasets, convolutional inductive biases, which ViT models lack, play a key role in CNN model performance. However, with large enough data, learning the relevant patterns directly outweighs inductive biases, and this is where ViT excels [3].

Finally, the authors analyzed the models' transfer performance from JFT-300M versus the total pre-training compute resources allocated, across different architectures, as shown in Fig. 8. Here, we see that Vision Transformers outperform ResNets with the same computational budget across the board. ViT uses approximately 2-4 times less compute to attain performance similar to ResNet [3]. Implementing a hybrid model does improve performance at smaller model sizes, but the discrepancy vanishes for larger models, which the authors find surprising, as the initial hypothesis is that the convolutional local feature processing should be able to assist ViT regardless of compute size [3].

4.1 What does the ViT model learn?

In order to understand how ViT processes image data, it is important to analyze its internal representations. In Part 3, we saw that the input patches generated from the image are fed into a linear embedding layer that projects each 16×16 patch into a lower-dimensional vector space, and the resulting embedded representations are then combined with positional embeddings. Fig. 9 shows that the model indeed learns to encode the relative position of each patch in the image. The authors used cosine similarity between the learned positional embeddings across patches [3]. High cosine similarity values emerge in the area of the position embedding matrix that corresponds to the patch's own location; e.g., the top-right patch (row 1, col 7) has correspondingly high cosine similarity values (yellow pixels) in the top-right area of the position embedding matrix [3].
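The similarity analysis described above can be sketched as follows: pick one patch, compute the cosine similarity between its positional embedding and that of every other patch, and arrange the results on the patch grid. The function names and the 2 x 2 grid of hand-picked 2-dimensional embeddings are assumptions for illustration; the embeddings in [3] are learned and much larger.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def similarity_map(pos_embed, index):
    """Similarity of the embedding at `index` to every positional embedding."""
    return [cosine_similarity(pos_embed[index], other) for other in pos_embed]


def to_grid(values, grid_width):
    """Reshape a flat list of per-patch values into rows of the patch grid."""
    return [values[r * grid_width:(r + 1) * grid_width]
            for r in range(len(values) // grid_width)]


# Toy positional embeddings for a 2 x 2 patch grid (made-up values).
toy_pos_embed = [[1.0, 0.0], [1.0, 1.0], [0.0, 1.0], [-1.0, 0.0]]

sims = similarity_map(toy_pos_embed, 0)   # similarities for the top-left patch
for row in to_grid(sims, 2):
    print([round(v, 3) for v in row])
```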

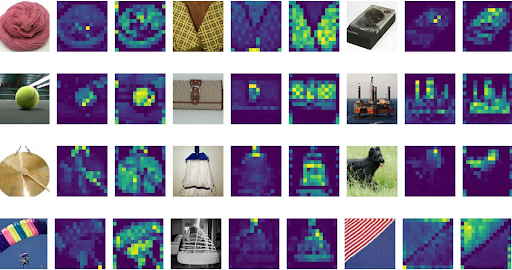

Meanwhile, Fig. 10 (left) shows the top principal components of the learned embedding filters that are applied to the raw image patches prior to the addition of the positional embeddings. What is interesting to me is how similar this is to the learned hidden layer representations that you get from convolutional neural networks, an example of which is shown in the same figure (right) using the AlexNet architecture.

The first layer of filters from AlexNet (right) [6].

By design, the self-attention mechanism should allow ViT to integrate information across the entire image, even at the lowest layer, effectively giving ViTs a global receptive field from the start. We can see this effect to some extent in Fig. 10, where the learned embedding filters captured lower-level features like lines and grids, as well as higher-level patterns combining lines and color blobs. This is in contrast with CNNs, whose receptive field size at the lowest layer is very small (because the local application of the convolution operation only attends to the area defined by the filter size), and only widens towards the deeper convolutions, as further applications of convolutions extract context from the combined information extracted from lower layers. The authors further tested this by measuring the attention distance, which is computed from the “average distance in the image space across which information is integrated based on the attention weights” [3]. The results are shown in Fig. 11.

From the figure, we can see that even at very low layers of the network, some heads already attend to most of the image (as indicated by data points with high mean attention distance values at low network depth), which supports the ability of the ViT model to integrate image information globally, even at the lowest layers.
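One way to compute such a mean attention distance for a single head is sketched below. This is an interpretation of the quoted definition rather than the authors' code: it assumes the attention matrix covers only the patch tokens (no cls token), that each row sums to 1, and that distance is measured in pixels between patch positions on the grid. The function name and toy inputs are hypothetical.

```python
import math


def mean_attention_distance(attn, grid_h, grid_w, patch_size):
    """Average pixel distance between query and key patches, weighted by attention.

    attn[i][j] is the attention weight from query patch i to key patch j,
    with patches numbered row by row on a grid_h x grid_w grid.
    """
    n = grid_h * grid_w
    total = 0.0
    for i in range(n):
        yi, xi = divmod(i, grid_w)
        for j in range(n):
            yj, xj = divmod(j, grid_w)
            distance = math.hypot(yi - yj, xi - xj) * patch_size
            total += attn[i][j] * distance
    return total / n   # average over query patches


# A head that only looks at the query patch itself is purely local.
local_head = [[1.0, 0.0], [0.0, 1.0]]
# A head that spreads attention evenly over both patches looks further away.
global_head = [[0.5, 0.5], [0.5, 0.5]]

print(mean_attention_distance(local_head, 1, 2, 16))   # 0.0
print(mean_attention_distance(global_head, 1, 2, 16))  # 8.0
```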

Finally, the authors also calculated the attention maps from the output token to the input space using Attention Rollout, by averaging the attention weights of the ViT-L/16 across all heads and then recursively multiplying the weight matrices of all layers. This results in a nice visualization of what the output layer attends to prior to classification, shown in Fig. 12 [3].
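The procedure as summarized above (average over heads, then multiply the per-layer matrices from the lowest layer upward) can be sketched in a few lines. This is a simplified illustration under that description only, not the authors' implementation; the function names and the two toy single-head layers are assumptions.

```python
def matmul(a, b):
    """Multiply two matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def average_heads(layer):
    """layer[h][i][j] holds head h's attention; return the mean over heads."""
    num_heads = len(layer)
    n = len(layer[0])
    return [[sum(head[i][j] for head in layer) / num_heads for j in range(n)]
            for i in range(n)]


def attention_rollout(layers):
    """Multiply head-averaged attention matrices from the first layer to the last."""
    n = len(layers[0][0])
    rollout = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]
    for layer in layers:
        rollout = matmul(average_heads(layer), rollout)
    return rollout


# Two toy layers, one head each, over two tokens (made-up weights).
layer_1 = [[[0.5, 0.5], [0.0, 1.0]]]
layer_2 = [[[1.0, 0.0], [0.5, 0.5]]]

print(attention_rollout([layer_1, layer_2]))  # [[0.5, 0.5], [0.25, 0.75]]
```

Row i of the result estimates how much each input token contributes to token i at the top layer; for a ViT, the row belonging to the cls token would be reshaped onto the patch grid to produce the visualization.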

5. So, is ViT the future of Computer Vision?

The Vision Transformer (ViT) introduced by Dosovitskiy et al. in the research study showcased in this paper is a groundbreaking architecture for computer vision tasks. Unlike previous methods that introduce image-specific biases, ViT treats an image as a sequence of patches and processes it using a standard Transformer encoder, similar to how Transformers are used in NLP. This straightforward yet scalable strategy, combined with pre-training on extensive datasets, has yielded impressive results, as discussed in Part 4. The Vision Transformer either matches or surpasses the state of the art on numerous image classification datasets (Fig. 6, 7, and 8), all while maintaining cost-effectiveness in pre-training [3].

However, like any technology, it has its limitations. First, in order to perform well, ViTs require a very large amount of training data that not everyone has access to at the required scale, especially when compared to traditional CNNs. The authors of the paper used the JFT-300M dataset, which is a limited-access dataset managed by Google [7]. The dominant approach to get around this is to use a model pre-trained on the large dataset, and then fine-tune it on smaller (downstream) tasks. Second, there are still very few pre-trained ViT models available compared to the available pre-trained CNN models, which limits the availability of transfer learning benefits for these smaller, much more specific computer vision tasks. Third, by design, ViTs process images as sequences of tokens (discussed in Part 3), which means they do not naturally capture spatial information [3]. While adding positional embeddings does help remedy this lack of spatial context, ViTs may not perform as well as CNNs in image localization tasks, given that CNNs' convolutional layers are excellent at capturing these spatial relationships.

Moving forward, the authors mention the need to further study scaling ViTs for other computer vision tasks such as image detection and segmentation, as well as other training methods like self-supervised pre-training [3]. Future research may focus on making ViTs more efficient and scalable, such as developing smaller and more lightweight ViT architectures that can still deliver the same competitive performance. Furthermore, providing better accessibility by creating and sharing a wider range of pre-trained ViT models for various tasks and domains can further facilitate the development of this technology in the future.

References

- N. Pogeant, “Transformers - the NLP revolution,” Medium, https://medium.com/mlearning-ai/transformers-the-nlp-revolution-5c3b6123cfb4 (accessed Sep. 23, 2023).

- A. Vaswani et al., “Attention is all you need,” NIPS 2017.

- A. Dosovitskiy et al., “An Image is Worth 16×16 Words: Transformers for Image Recognition at Scale,” ICLR 2021.

- X. Wang, G. Chen, G. Qian, P. Gao, X.-Y. Wei, Y. Wang, Y. Tian, and W. Gao, “Large-scale multi-modal pre-trained models: A comprehensive survey,” Machine Intelligence Research, vol. 20, no. 4, pp. 447–482, 2023, doi: 10.1007/s11633-022-1410-8.

- H. Wang, “Addressing Syntax-Based Semantic Complementation: Incorporating Entity and Soft Dependency Constraints into Metonymy Resolution,” Scientific Figure on ResearchGate. Available from: https://www.researchgate.net/figure/Attention-matrix-visualization-a-weights-in-BERT-Encoding-Unit-Entity-BERT-b_fig5_359215965 (accessed Sep. 24, 2023).

- A. Krizhevsky et al., “ImageNet Classification with Deep Convolutional Neural Networks,” NIPS 2012.

- C. Sun et al., “Revisiting Unreasonable Effectiveness of Data in Deep Learning Era,” Google Research, ICCV 2017.

* ChatGPT, used sparingly to rephrase certain paragraphs for better grammar and more concise explanations. All ideas in the report belong to me unless otherwise indicated. Chat Reference: https://chat.openai.com/share/165501fe-d06d-424b-97e0-c26a81893c69