Introduction and Problem

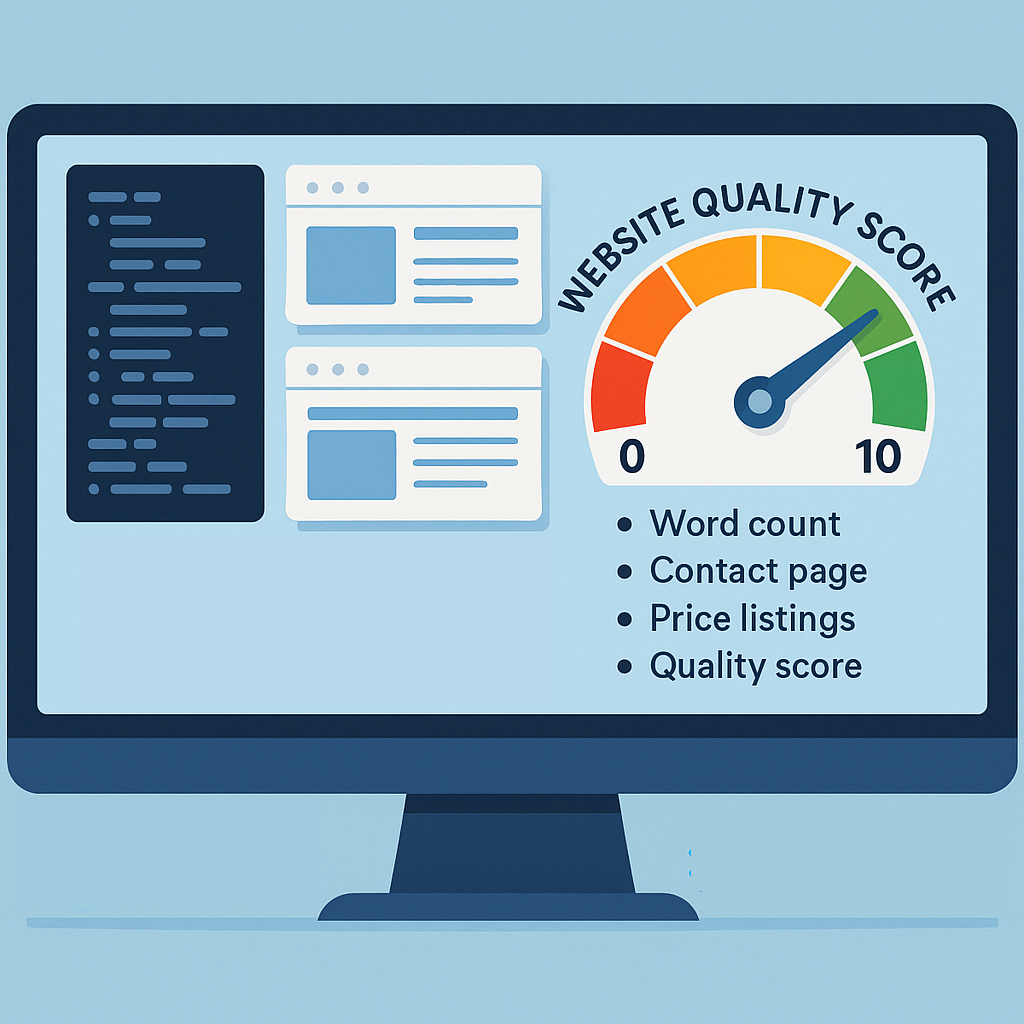

Imagine you're looking at a database containing hundreds of merchants across multiple countries, each with its own website. Your goal? Identify the top candidates to partner with in a new business proposal. Manually browsing each website is impossible at scale, so you need an automated way to gauge "how good" each merchant's online presence is. Enter the website quality score: a numeric feature (0-10) that captures key aspects of a site's professionalism, content depth, navigability, and visible product listings with prices. By integrating this score into your machine learning pipeline, you gain a powerful signal that helps your model distinguish the highest-quality merchants and dramatically improve selection accuracy.

Table of Contents

- Introduction and Problem

- Technical Implementation

- Legal & Ethical Considerations

- Getting Started

- Fetch HTML Script in Python

- Assign a Quality Score Script in PySpark

- Conclusion

Technical Implementation

Legal & Ethical Considerations

Be a good citizen of the web.

- This scraper only counts words, links, images, scripts and simple "contact/about/price" flags; it does not extract or store any private or sensitive data.

- Throttle responsibly: use modest concurrency (e.g. CONCURRENT_REQUESTS ≤ 10), insert small pauses between batches, and avoid hammering the same domain.

- Retention policy: once you've computed your features or scores, purge raw HTML within a reasonable window (e.g. after 7-14 days).

- For very large runs, or if you plan to share extracted HTML, consider reaching out to site owners for permission or notifying them of your usage.
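One way to act on the "avoid hammering the same domain" advice is to reorder the URL list so that consecutive requests go to different hosts. The sketch below is only an illustration: `interleave_by_domain` is a hypothetical helper that is not part of the repository, and the example URLs are made up.

```python
from collections import defaultdict, deque
from urllib.parse import urlsplit


def interleave_by_domain(urls):
    """Reorder URLs round-robin across hosts so one host is not hit back-to-back."""
    buckets = defaultdict(deque)
    for url in urls:
        buckets[urlsplit(url).netloc.lower()].append(url)

    ordered = []
    queues = list(buckets.values())
    while queues:
        # Take one URL from each host that still has URLs left.
        for queue in queues:
            ordered.append(queue.popleft())
        queues = [queue for queue in queues if queue]
    return ordered


example_urls = [
    "https://a.example/1",
    "https://a.example/2",
    "https://b.example/1",
    "https://a.example/3",
    "https://c.example/1",
]
print(interleave_by_domain(example_urls))
```

URLs from the same host can still end up next to each other at the tail of the list, so pauses between batches remain useful.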

Getting Started

Here's your folder structure once you clone the repository https://github.com/lucasbraga461/feat-eng-websites/ :

Code block 1. GitHub repository folder structure

├── src
│   ├── helpers
│   │   └── snowflake_data_fetch.py
│   ├── p1_fetch_html_from_websites.py
│   └── process_data
│       ├── s1_gather_initial_table.sql
│       └── s2_create_table_with_website_feature.sql
├── notebooks
│   └── ps_website_quality_score.ipynb
├── data
│   └── websites_initial_table.csv
├── README.md
├── requirements.txt
└── venv
└── .gitignore
└── .env

Your dataset should ideally be in Snowflake. To give you an idea of how to prepare it in case it comes from different tables, refer to src/process_data/s1_gather_initial_table.sql. Here's a snippet of it:

Code block 2. s1_gather_initial_table.sql

CREATE OR REPLACE TABLE DATABASE.SCHEMA.WEBSITES_INITIAL_TABLE AS
(
    SELECT
        DISTINCT COUNTRY, WEBSITE_URL
    FROM DATABASE.SCHEMA.COUNTRY_ARG_DATASET
    WHERE WEBSITE_URL IS NOT NULL
) UNION ALL (
    SELECT
        DISTINCT COUNTRY, WEBSITE_URL
    FROM DATABASE.SCHEMA.COUNTRY_BRA_DATASET
    WHERE WEBSITE_URL IS NOT NULL
) UNION ALL (
    [...]
    SELECT
        DISTINCT COUNTRY, WEBSITE_URL
    FROM DATABASE.SCHEMA.COUNTRY_JAM_DATASET
    WHERE WEBSITE_URL IS NOT NULL
)
;

Here's what this initial table should look like:

Fetch HTML Script in Python

With the data ready, this is how you call the script, assuming your data is in Snowflake:

Code block 3. p1_fetch_html_from_websites.py using Snowflake dataset

cd ~/Doc/GitHub/feat-eng-websites
python3 src/p1_fetch_html_from_websites.py -c BRA --use_snowflake

- The Python script expects the Snowflake table to be in DATABASE.SCHEMA.WEBSITES_INITIAL_TABLE, which can be adjusted for your use case in the code itself.

That will open a window in your browser asking you to authenticate to Snowflake. Once you authenticate, it'll pull the data from the designated table and proceed with fetching the website content.

If you choose to pull this data from a CSV file, then don't use the flag at the end and call it this way:

Code block 4. p1_fetch_html_from_websites.py using CSV dataset

cd ~/Doc/GitHub/feat-eng-websites
python3 src/p1_fetch_html_from_websites.py -c BRA

GIF 1. Running p1_fetch_html_from_websites.py

Here's why this script is powerful at fetching website content compared to a more basic approach, see Table 1:

Table 1. Advantages of this Fetch HTML script compared with a basic implementation

| Technique | Basic Approach | This script p1_fetch_html_from_websites.py |
| --- | --- | --- |
| HTTP fetching | Blocking requests.get() calls one-by-one | Async I/O with asyncio + aiohttp to issue many requests in parallel and overlap network waits |
| User-Agent | Single default UA header for all requests | Rotate through a list of real browser UA strings to evade basic bot-detection and throttling |
| Batching | Load & process the entire URL list in one go | Split into chunks via BATCH_SIZE so you can checkpoint, limit memory use, and recover mid-run |
| Retries & Timeouts | Rely on library defaults or crash on slow/unresponsive servers | Explicit MAX_RETRIES and TIMEOUT settings to retry transient failures and bound per-request wait times |
| Concurrency limit | Sequential or unbounded parallel calls (risking overload) | CONCURRENT_REQUESTS + aiohttp.TCPConnector + asyncio.Semaphore to throttle max in-flight connections |
| Event loop | Single loop reused; can hit "bound to different loop" errors when restarting | Create a fresh asyncio event loop per batch to avoid loop/semaphore binding errors and ensure isolation |
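To make the right-hand column of Table 1 more concrete, here is a minimal sketch of the same ideas using only the standard library: a semaphore caps in-flight requests, each attempt gets a timeout and a rotated User-Agent, failures are retried a few times, and every batch runs on a fresh event loop via asyncio.run. This is not the code from p1_fetch_html_from_websites.py. The `fetch` argument is a hypothetical coroutine standing in for the real aiohttp call, the UA strings are placeholders, and the constant values are illustrative assumptions.

```python
import asyncio
import random

# Illustrative values (assumptions); the real script defines its own settings.
CONCURRENT_REQUESTS = 10
MAX_RETRIES = 3
TIMEOUT = 5  # seconds allowed per attempt
BATCH_SIZE = 100

# Placeholder strings; a real list would hold full browser User-Agent values.
USER_AGENTS = ["example-browser-ua-1", "example-browser-ua-2"]


async def fetch_with_retries(url, fetch, semaphore, backoff):
    """Call `fetch` up to MAX_RETRIES times; return None if every attempt fails."""
    for attempt in range(1, MAX_RETRIES + 1):
        headers = {"User-Agent": random.choice(USER_AGENTS)}
        try:
            async with semaphore:  # caps the number of in-flight requests
                return await asyncio.wait_for(fetch(url, headers), timeout=TIMEOUT)
        except (asyncio.TimeoutError, OSError):
            await asyncio.sleep(backoff * attempt)  # wait a little longer each time
    return None


async def fetch_batch(urls, fetch, backoff):
    # The semaphore is created inside the running loop, so it belongs to this batch only.
    semaphore = asyncio.Semaphore(CONCURRENT_REQUESTS)
    tasks = [fetch_with_retries(url, fetch, semaphore, backoff) for url in urls]
    return await asyncio.gather(*tasks)


def run_in_batches(urls, fetch, batch_size=BATCH_SIZE, backoff=0.5):
    """Process URLs chunk by chunk; asyncio.run starts a fresh event loop per batch."""
    results = {}
    for start in range(0, len(urls), batch_size):
        batch = urls[start:start + batch_size]
        pages = asyncio.run(fetch_batch(batch, fetch, backoff))
        results.update(zip(batch, pages))
        # A checkpoint (for example, writing `results` to disk) could go here.
    return results
```

Passing the fetch coroutine in as an argument keeps the sketch runnable without network access; in practice it would wrap an HTTP client call.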

It's generally better to store raw HTML in a proper database (Snowflake, BigQuery, Redshift, Postgres, etc.) rather than in CSV files. A single page's HTML can easily exceed spreadsheet limits (e.g. Google Sheets caps at 50,000 characters per cell), and managing hundreds of pages would bloat and slow down CSVs. While we include a CSV option here for quick demos or minimal setups, large-scale scraping and feature engineering are far more reliable and performant when run in a scalable data warehouse like Snowflake.

If you run it for, say, BRA, ARG and JAM, this is how your data folder will look:

Code block 5. Folder structure once you ran it for ARG, BRA and JAM

├── data
│   ├── website_scraped_data_ARG.csv
│   ├── website_scraped_data_BRA.csv
│   ├── website_scraped_data_JAM.csv
│   └── websites_initial_table.csv

Refer to Figure 2 to visualize the output that the first script generates, i.e. the table website_scraped_data_BRA. Note that one of the columns is html_content, which is a very large field since it holds the entire HTML content of the website.
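Because html_content can hold an entire page, reading these CSV files with Python's built-in csv module may fail with a "field larger than field limit" error, since the default limit is 131,072 characters per field. A minimal sketch of raising that limit follows. It builds a tiny in-memory file for illustration, and the `website` column name and the `read_scraped_rows` helper are assumptions rather than the repository's actual schema or code.

```python
import csv
import io


def read_scraped_rows(fileobj):
    """Read rows from a scraped-data CSV whose html_content fields may be very large."""
    # Raise the per-field limit; 2**31 - 1 fits in a C long on common platforms.
    csv.field_size_limit(2**31 - 1)
    return list(csv.DictReader(fileobj))


# Build a small in-memory CSV with one oversized HTML field (made-up data).
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=["website", "html_content"])
writer.writeheader()
writer.writerow({
    "website": "https://example.com",
    "html_content": "<html>" + "x" * 200_000 + "</html>",
})
buffer.seek(0)

rows = read_scraped_rows(buffer)
print(rows[0]["website"], len(rows[0]["html_content"]))
```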

Assign a Quality Score Script in PySpark

Because each page's HTML can be massive, and you'll have hundreds or thousands of pages, you can't efficiently process or store all that raw text in flat files. Instead, we hand off to Snowpark (Snowflake's DataFrame API, whose syntax closely resembles PySpark) for scalable feature extraction. See notebooks/ps_website_quality_score.ipynb for a ready-to-run example: just select the Python kernel in Snowflake and import the built-in Snowpark libraries to get your Snowpark session (see Code Block 6).

Code block 6. Imports and Snowpark session

import pandas as pd
from bs4 import BeautifulSoup
import re
from tqdm import tqdm
import snowflake.snowpark as snowpark
from snowflake.snowpark.functions import col, lit, udf
from snowflake.snowpark.context import get_active_session

session = get_active_session()

Every market speaks its own language and follows different conventions, so we bundle all these rules into a simple country-specific config. For each country we define the contact/about keywords and price-pattern regexes that signal a "good" merchant website, then point the script at the corresponding Snowflake input and output tables. This makes the feature extractor fully data-driven, reusing the same code for every region with just a change of config.

Code block 7. Config file

country_configs = {
    "ARG": {
        "name": "Argentina",
        "contact_keywords": ["contacto", "contáctenos", "observaciones"],
        "about_keywords": ["acerca de", "sobre nosotros", "quiénes somos"],
        "price_patterns": [r'ARS\s?\d+', r'\$\s?\d+', r'\d+\.\d{2}\s?\$'],
        "input_table": "DATABASE.SCHEMA.WEBSITE_SCRAPED_DATA_ARG",
        "output_table": "DATABASE.SCHEMA.WEBSITE_QUALITY_SCORES_ARG"
    },
    "BRA": {
        "name": "Brazil",
        "contact_keywords": ["contato", "fale conosco", "entre em contato"],
        "about_keywords": ["sobre", "quem somos", "informações"],
        "price_patterns": [r'R\$\s?\d+', r'\d+\.\d{2}\s?R\$'],
        "input_table": "DATABASE.SCHEMA.WEBSITE_SCRAPED_DATA_BRA",
        "output_table": "DATABASE.SCHEMA.WEBSITE_QUALITY_SCORES_BRA"
    },
    [...]

Before we can register and use our Python scraper logic inside Snowflake, we first create a stage, a persistent storage area, by running the DDL in Code Block 8. This creates a named location @STAGE_WEBSITES under your DATABASE.SCHEMA, where we'll upload the UDF package (including dependencies like BeautifulSoup and lxml). Once the stage exists, we deploy the extract_features_udf there, making it available to any Snowflake session for HTML parsing and feature extraction. Finally, we set the country_code variable to kick off the pipeline for a specific country, before looping through other country codes as needed.

Code block 8. Create a stage folder to keep the UDFs created

-- CREATE STAGE DATABASE.SCHEMA.STAGE_WEBSITES;
country_code = "BRA"

In this part of the code (see Code block 9), we define the UDF 'extract_features_udf' that extracts information from the HTML content. Here's what this part of the code does:

- Defines the Snowpark UDF called 'extract_features_udf' that lives in the Snowflake stage folder previously created

- Parses the raw HTML with the well-known BeautifulSoup library

- Extracts text features:

  - Total word count

  - Page title length

  - Flags for 'contact' and 'about' pages

- Extracts structural features:

  - Number of links

  - Number of images

  - Number of scripts

- Detects product listings by looking for any price pattern in the text

- Returns a dictionary of all these counts/flags, or zeros if the HTML was empty or any error occurred
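The feature list above can be tried locally before touching Snowflake. The sketch below is a rough stand-in that uses Python's built-in html.parser instead of BeautifulSoup, so its counts may differ slightly from the UDF's on malformed pages. The `PageStats` class, the `extract_features_local` function, the sample HTML, and the choice to match keywords as escaped literals are all assumptions made for illustration.

```python
import re
from html.parser import HTMLParser


class PageStats(HTMLParser):
    """Counts <a>, <img> and <script> tags and collects text while parsing."""

    def __init__(self):
        super().__init__()
        self.tag_counts = {"a": 0, "img": 0, "script": 0}
        self.text_parts = []
        self.title_parts = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        if tag in self.tag_counts:
            self.tag_counts[tag] += 1
        if tag == "title":
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        self.text_parts.append(data)
        if self._in_title:
            self.title_parts.append(data)


def extract_features_local(html, contact_keywords, about_keywords, price_patterns):
    parser = PageStats()
    parser.feed(html or "")
    parser.close()
    text = " ".join(" ".join(parser.text_parts).split())  # collapse whitespace
    title = "".join(parser.title_parts).strip()

    def has_any(patterns):
        # 1 if any pattern matches the page text (case-insensitive), else 0.
        return int(any(re.search(p, text, re.I) for p in patterns))

    return {
        "word_count": len(text.split()),
        "title_length": len(title),
        "has_contact_page": has_any(re.escape(k) for k in contact_keywords),
        "has_about_page": has_any(re.escape(k) for k in about_keywords),
        "num_links": parser.tag_counts["a"],
        "num_images": parser.tag_counts["img"],
        "num_scripts": parser.tag_counts["script"],
        "has_price_listings": has_any(price_patterns),
    }


sample_html = (
    "<html><head><title>Loja Exemplo</title></head><body>"
    "<a href='/contato'>Fale conosco</a> <a href='/sobre'>Quem somos</a>"
    "<img src='p.png'><p>Camiseta R$ 49</p>"
    "<script>var x = 1;</script></body></html>"
)
features = extract_features_local(
    sample_html,
    contact_keywords=["contato", "fale conosco", "entre em contato"],
    about_keywords=["sobre", "quem somos", "informações"],
    price_patterns=[r"R\$\s?\d+", r"\d+\.\d{2}\s?R\$"],
)
print(features)
```

Short keywords such as "sobre" also match inside longer words, so the contact/about flags are best read as rough signals rather than proof that such a page exists.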

Code block 9. Function to extract HTML content

@udf(name="extract_features_udf",
     is_permanent=True,
     replace=True,
     stage_location="@STAGE_WEBSITES",
     packages=["beautifulsoup4", "lxml"])
def extract_features(html_content: str, CONTACT_KEYWORDS: str, ABOUT_KEYWORDS: str, PRICE_PATTERNS: list) -> dict:
    """Extracts text, structure, and product-related features from HTML."""
    if not html_content:
        return {
            "word_count": 0, "title_length": 0, "has_contact_page": 0,
            "has_about_page": 0, "num_links": 0, "num_images": 0,
            "num_scripts": 0, "has_price_listings": 0
        }
    try:
        soup = BeautifulSoup(html_content, 'lxml')
        # soup = BeautifulSoup(html_content[:MAX_HTML_SIZE], 'lxml')
        # Text Features
        text = soup.get_text(" ", strip=True)
        word_count = len(text.split())
        title = soup.title.string.strip() if soup.title and soup.title.string else ""
        has_contact = bool(re.search(CONTACT_KEYWORDS, text, re.I))
        has_about = bool(re.search(ABOUT_KEYWORDS, text, re.I))
        # Structural Features
        num_links = len(soup.find_all("a"))
        num_images = len(soup.find_all("img"))
        num_scripts = len(soup.find_all("script"))
        # Product Listings Detection
        # price_patterns = [r'€\s?\d+', r'\d+\.\d{2}\s?€', r'\$\s?\d+', r'\d+\.\d{2}\s?\$']
        has_price = any(re.search(pattern, text, re.I) for pattern in PRICE_PATTERNS)
        return {
            "word_count": word_count, "title_length": len(title), "has_contact_page": int(has_contact),
            "has_about_page": int(has_about), "num_links": num_links, "num_images": num_images,
            "num_scripts": num_scripts, "has_price_listings": int(has_price)
        }
    except Exception:
        # Any parsing error falls back to an all-zero feature set
        return {"word_count": 0, "title_length": 0, "has_contact_page": 0,
                "has_about_page": 0, "num_links": 0, "num_images": 0,
                "num_scripts": 0, "has_price_listings": 0}

And the final part of the notebook, "Process and generate output table", does four main things:

First, it applies the UDF extract_features_udf to the raw HTML, producing a single features column that holds a small dict of counts/flags for each page, see code block 10.

Code block 10. Applies UDF to each row

df_processed = df.with_column(
    "features",
    extract_features(col("HTML_CONTENT"))
)

Secondly, it turns each key in the features dict into its own column in the DataFrame (so you get separate word_count, num_links, etc.), see code block 11.

Code block 11. Explode that dictionary into real columns

df_final = df_processed.select(
    col("WEBSITE"),
    col("features")["word_count"].alias("word_count"),
    ...,
    col("features")["has_price_listings"].alias("has_price_listings")
)

Thirdly, based on business rules defined by me, it builds a 0-10 score by assigning points for each feature (e.g. word-count thresholds, presence of contact/about pages, product listings), see code block 12.

Code block 12. Compute a single quality score

df_final = df_final.with_column(
    "quality_score",
    ( (col("word_count") > 300).cast("int")*2
    + (col("word_count") > 100).cast("int")
    + (col("title_length") > 10).cast("int")
    + col("has_contact_page")
    + col("has_about_page")
    + (col("num_links") > 10).cast("int")
    + (col("num_images") > 5).cast("int")
    + col("has_price_listings")*3
    )
)

And finally, it writes the final table back into Snowflake (replacing any existing table) so you can query or join these quality scores later.

Code block 13. Write the output table to Snowflake

df_final.write.mode("overwrite").save_as_table(OUTPUT_TABLE)
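To sanity-check the scoring rules outside Snowflake, the same arithmetic can be written as a plain Python function. This is a sketch that mirrors the thresholds and weights shown in Code block 12; the `quality_score` name and the two sample feature dicts are made up for illustration.

```python
def quality_score(f):
    """Apply the point rules from Code block 12 to one page's feature dict."""
    return (
        2 * int(f["word_count"] > 300)
        + int(f["word_count"] > 100)
        + int(f["title_length"] > 10)
        + f["has_contact_page"]
        + f["has_about_page"]
        + int(f["num_links"] > 10)
        + int(f["num_images"] > 5)
        + 3 * f["has_price_listings"]
    )


# Made-up examples: one page that meets every rule, one that meets few.
rich_page = {
    "word_count": 850, "title_length": 32, "has_contact_page": 1,
    "has_about_page": 1, "num_links": 40, "num_images": 12,
    "has_price_listings": 1,
}
thin_page = {
    "word_count": 150, "title_length": 5, "has_contact_page": 1,
    "has_about_page": 0, "num_links": 3, "num_images": 0,
    "has_price_listings": 0,
}

print(quality_score(rich_page))  # 11
print(quality_score(thin_page))  # 2
```

With these weights, a page that satisfies every rule adds up to 11, so if a strict 0-10 range matters, capping the result (for example with min(score, 10)) is one option.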

Conclusion

Once computed and stored, the website quality score becomes a straightforward input to virtually any predictive model, whether you're training a logistic regression, random forest, or deep neural network. As one of your strongest features, it quantifies a merchant's online maturity and reliability, complementing other data like sales volume or customer reviews. By combining this web-derived signal with your existing metrics, you'll be able to rank, filter, and recommend partners far more effectively, and ultimately drive better business outcomes.

GitHub repository implementation: https://github.com/lucasbraga461/feat-eng-websites/

Disclaimer

The screenshots and figures in this article (e.g. of Snowflake query results) were created by the author. None of the numbers are drawn from real business data; they were manually generated for illustrative purposes. Likewise, all SQL scripts are handcrafted examples; they're not extracted from any live environment but are designed to closely resemble what a company using Snowflake might encounter.